10 SaaS Marketing Metrics to Track and Why (2026)

The essential SaaS marketing metrics with formulas, stage benchmarks, and practical guidance on CAC, LTV, MRR, churn, NRR, and marketing attribution.

You have 50+ published articles. Most won't get cited by AI this week — not because the content is bad, but because it's structured for humans scanning, not AI extracting. Here's how to fix that.

Optimizing content for AI search means restructuring how you present answers — leading with complete responses, adding structured formats AI can extract, and building citation surface area across multiple platforms.

The five steps below walk through exactly what to change, in what order, and how long each step takes. A solo content manager can run through a full content library audit in 2–3 days.

What this covers: the structural gap between Google-optimized content and AI-cited content, a five-step optimization process you can run on your existing library, and how to prioritize so you're working on the pages that will move the needle.

---

The gap between content that ranks on Google and content that gets cited by AI is structural — not about quality, authority, or topic coverage.

Here's the core problem: AI search tools use RAG (Retrieval-Augmented Generation) to pull content in 40–60 word chunks from the opening of each section. If the answer isn't in the first sentence, the chunk they grab is uncitable: the AI can't use it to answer the question clearly.
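To make the chunking idea concrete, here is a minimal Python sketch that models extraction as "take the first 60 words of a section" and checks whether the answer falls inside that window. The 60-word cap comes from the 40–60 word range above. The function names and the word-based split are assumptions for illustration; real retrieval systems chunk by tokens and by their own boundary rules.

```python
def first_chunk(section_text: str, max_words: int = 60) -> str:
    """Return the opening window of a section, capped at max_words words.

    Assumption: extraction is modeled as a simple word window; real
    retrievers split by tokens and their own boundary rules.
    """
    return " ".join(section_text.split()[:max_words])


def answer_in_first_chunk(
    section_text: str, answer_terms: list[str], max_words: int = 60
) -> bool:
    """True if every answer term appears inside the opening window."""
    chunk = first_chunk(section_text, max_words).lower()
    return all(term.lower() in chunk for term in answer_terms)


bluf = (
    "Answer Engine Optimization (AEO) is the practice of structuring content "
    "so AI systems can extract and cite your brand in generated answers."
)
# 18 words of warm-up, repeated 4 times (72 words), pushes the answer past word 60.
warm_up = (
    "Before we get into the tactics, it's worth understanding how AI search "
    "has evolved over the past year. " * 4
) + bluf

print(answer_in_first_chunk(bluf, ["answer engine optimization"]))     # True
print(answer_in_first_chunk(warm_up, ["answer engine optimization"]))  # False
```

The same answer sentence passes or fails depending only on where it sits, which is the structural point this section makes.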

Research published by Ahrefs in 2025 found that only 11% of domains are cited by both ChatGPT and Perplexity, so cross-platform citation is rare. That gap isn't explained by domain authority; it's explained by structure. A DR 30 page (Ahrefs Domain Rating) with BLUF-structured sections (bottom line up front: the answer comes first) can outperform a DR 80 page written in warm-up-heavy prose.

Separately, a 2024 study found that 76% of Google AI Overview citations come from pages already in the top 10 — so your SEO foundation still matters for Google specifically. For ChatGPT and Perplexity, content structure matters more than ranking position.

Your existing content library is the raw material. The AI content optimization work is structural.

---

The most valuable first move is also the fastest: test whether each H2 section in your top articles can stand alone as a complete answer.

The test: pull any section out of context. Read just that section. Does the first sentence answer the section's question completely, without requiring the reader to have read anything before it?

What passes: "Answer Engine Optimization (AEO) is the practice of structuring content so AI systems can extract and cite your brand in generated answers."

What fails: "Before we get into the tactics, it's worth understanding how AI search has evolved over the past year."

Apply this to your top 10 pages by traffic. Run it on every H2 section and flag every section that fails. That list is your AI content optimization queue.
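If you want a rough pre-screen before reading every section yourself, a sketch like the one below can flag H2 sections whose first sentence opens with a warm-up phrase. It assumes Markdown source with `## ` headings, and the `WARM_UP_OPENERS` list is a hypothetical starting point, not a complete definition of failure. A human read is still the actual test.

```python
import re

# Hypothetical warm-up openers; extend this with phrases your team overuses.
WARM_UP_OPENERS = (
    "before we",
    "it's worth",
    "in this section",
    "let's",
    "as we",
    "when it comes to",
)


def split_h2_sections(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the body text beneath it, up to the next H2."""
    sections: dict[str, str] = {}
    current = None
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = ""
        elif current is not None:
            sections[current] += line + "\n"
    return sections


def first_sentence(text: str) -> str:
    """Return the text up to and including the first . ! or ? mark."""
    match = re.search(r"(.+?[.!?])(\s|$)", text.strip(), re.DOTALL)
    return match.group(1).strip() if match else text.strip()


def flag_failing_sections(markdown: str) -> list[str]:
    """Return H2 headings whose first sentence opens with a warm-up phrase."""
    failing = []
    for heading, body in split_h2_sections(markdown).items():
        if first_sentence(body).lower().startswith(WARM_UP_OPENERS):
            failing.append(heading)
    return failing


article = """## What is AEO?
Answer Engine Optimization (AEO) is the practice of structuring content so AI systems can extract and cite your brand in generated answers.

## How has AI search changed?
Before we get into the tactics, it's worth understanding how AI search has evolved over the past year.
"""
print(flag_failing_sections(article))  # ['How has AI search changed?']
```

A phrase list will miss sections that fail in subtler ways, so treat a clean result as "read these next," not "these pass."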

Time investment: 2–3 hours to audit 10 pages. Most content managers are surprised by how many sections fail when they do this honestly.

---

Once you've identified sections that bury the answer, the fix is consistent: move the complete answer to sentence one of every section, then add context and elaboration after.

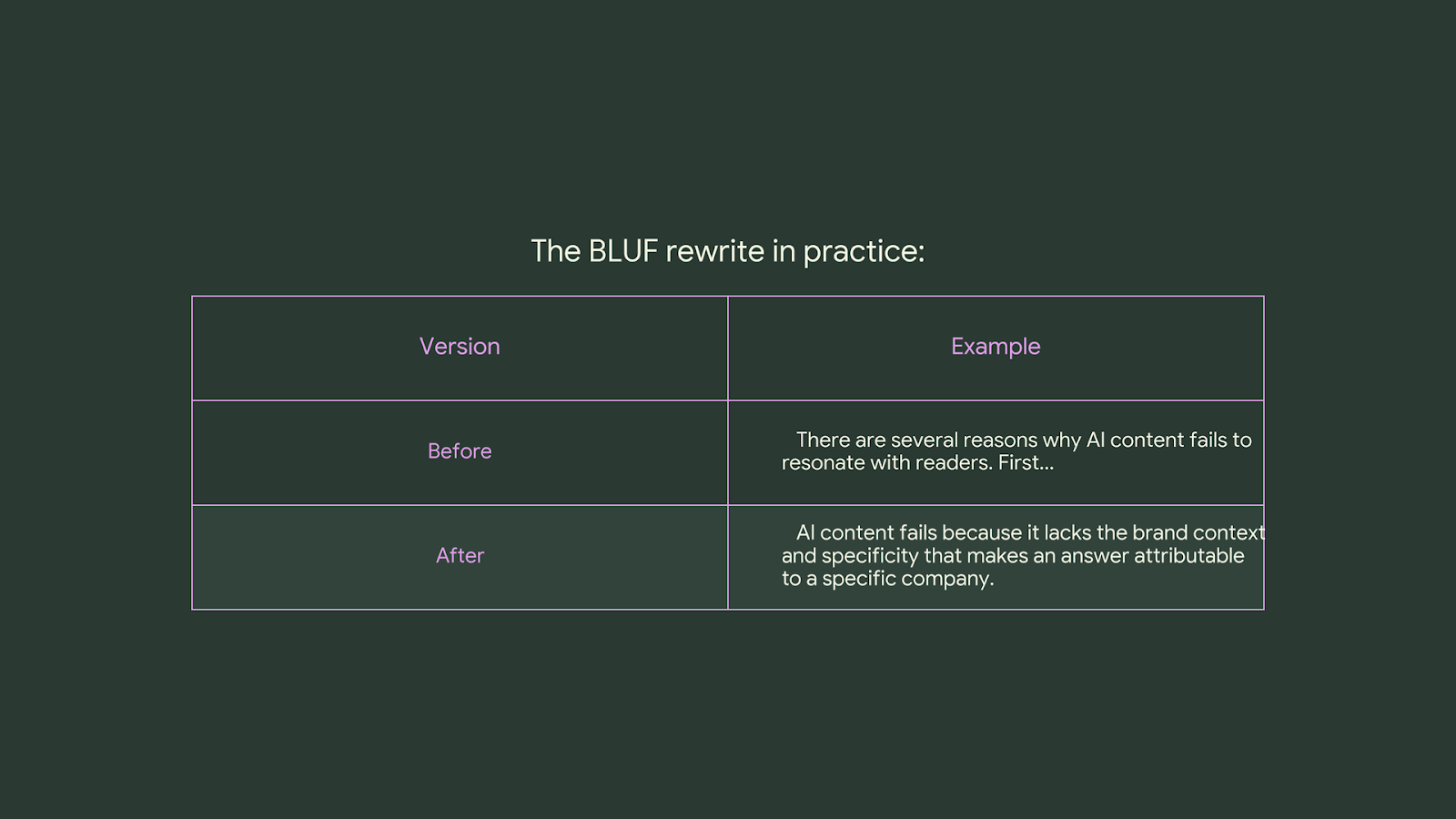

The BLUF rewrite in practice:

The before version warms up to the point. The after version states the point immediately, then earns elaboration. The AI extracts the first sentence — and if that sentence is useful, it becomes a citation candidate.

How to do this at scale: paste the section into Claude or ChatGPT with this instruction: "Rewrite this section so the first sentence answers the section question completely. Preserve all supporting details, examples, and internal links." Review the output. Keep what's right; adjust what isn't.
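For a longer queue, you can assemble the prompts in one pass instead of pasting sections one at a time. This sketch reuses the instruction quoted above and produces one JSON object per flagged section. The `flagged` dictionary shape and the JSONL output are assumptions, and the call to Claude or ChatGPT is deliberately left out because it depends on which interface or API you use.

```python
import json

# The rewrite instruction quoted in this guide; the batch format is an assumption.
REWRITE_INSTRUCTION = (
    "Rewrite this section so the first sentence answers the section question "
    "completely. Preserve all supporting details, examples, and internal links."
)


def build_rewrite_prompts(flagged: dict[str, str]) -> list[dict[str, str]]:
    """Pair each flagged section (heading -> body) with the BLUF instruction."""
    return [
        {
            "heading": heading,
            "prompt": f"{REWRITE_INSTRUCTION}\n\nSection heading: {heading}\n\n{body.strip()}",
        }
        for heading, body in flagged.items()
    ]


def to_jsonl(prompts: list[dict[str, str]]) -> str:
    """Serialize prompts as one JSON object per line."""
    return "\n".join(json.dumps(p, ensure_ascii=False) for p in prompts)


flagged = {
    "How has AI search changed?": (
        "Before we get into the tactics, it's worth understanding "
        "how AI search has evolved over the past year."
    ),
}
prompts = build_rewrite_prompts(flagged)
print(to_jsonl(prompts))
```

Including the heading in each prompt gives the model the "section question" the instruction refers to; the review step stays manual either way.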

Time per page: 20–30 minutes for an experienced editor working through this methodically. That's 10 articles in a full day.

---

Comparison tables, numbered lists, and definition blocks are the highest-citation formats in AI-generated answers — add at least one structured element to every article in your content optimization queue.

This is not opinion. Profound analyzed 2.6 billion AI response data points and found that listicle-format content — structured lists, comparison tables, numbered sequences — accounts for 25.37% of all AI citations. Dense prose, however well-written, extracts poorly.

Three formats to add to every article:

- Comparison tables
- Numbered lists
- Definition blocks

Time per article: 15–20 minutes to add one structured element. Start with comparison tables — they have the highest visual impact and the clearest extraction pattern.
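Comparison tables are also the easiest structured element to generate from data you already have. Below is a small sketch that renders a pipe-syntax Markdown table (GitHub-flavored style, which is an assumption about what your CMS accepts); the example rows reuse the time estimates from this guide.

```python
def escape_cell(text: str) -> str:
    """Escape pipe characters so they don't split a Markdown table cell."""
    return text.replace("|", "\\|")


def markdown_table(headers: list[str], rows: list[list[str]]) -> str:
    """Render a pipe-syntax Markdown table from a header row and data rows."""
    lines = [
        "| " + " | ".join(escape_cell(h) for h in headers) + " |",
        "| " + " | ".join("---" for _ in headers) + " |",
    ]
    for row in rows:
        if len(row) != len(headers):
            raise ValueError("each row needs exactly one cell per header")
        lines.append("| " + " | ".join(escape_cell(c) for c in row) + " |")
    return "\n".join(lines)


rows = [
    ["Standalone test", "2–3 hours for 10 pages"],
    ["BLUF rewrite", "20–30 minutes per page"],
    ["Structured element", "15–20 minutes per article"],
    ["FAQ section", "Under an hour per article"],
]
print(markdown_table(["Step", "Time estimate"], rows))
```

Keeping one item per row and one attribute per column is what gives tables their clean extraction pattern.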

---

A properly structured FAQ section captures People Also Ask queries, gets cited in AI answers, and covers keyword variants — all from a single section you can add to any existing article in under an hour.

How to find the right questions: search your primary keyword in Google, screenshot the PAA box, and use those exact phrasings as your FAQ questions. Supplement with the Semrush Questions report and Ahrefs' "Also rank for" keyword data. You want questions phrased the way a person would actually ask them, not the way you'd write a header.

FAQ structure rules:

FAQPage schema: add it. Yoast and Rank Math both have FAQ blocks that apply schema automatically. This is the format most likely to appear in both Google PAA boxes and AI citations simultaneously.

How many per article: 5–7 for a pillar page, 3–5 for a supporting page.
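If your CMS doesn't have a Yoast or Rank Math FAQ block, you can write the FAQPage markup yourself. This sketch emits schema.org `FAQPage` JSON-LD from question-and-answer pairs and wraps it in a `<script type="application/ld+json">` tag; the helper names are hypothetical. One caveat worth knowing: since August 2023 Google has shown FAQ rich results only for well-known, authoritative government and health sites, so for most brands the markup works as machine-readable structure rather than a guaranteed rich result.

```python
import json


def faq_page_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script tag for the page's HTML.

    '</' is escaped as '<\\/' (a valid JSON escape) so answer text can't
    close the script element early.
    """
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


faqs = [
    (
        "What is Answer Engine Optimization (AEO)?",
        "Answer Engine Optimization (AEO) is the practice of structuring content "
        "so AI systems can extract and cite your brand in generated answers.",
    ),
]
print(as_script_tag(faq_page_jsonld(faqs)))
```

The question text in the markup should match the visible FAQ heading on the page, and the answer should match the visible answer.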

---

Getting cited by AI search is not just about your own content. If only things were that easy. AI systems prefer brands mentioned across multiple independent sources, which means Reddit threads, YouTube videos, and third-party listicles all count.

AI systems treat multi-source frequency as a trust signal. One page on your domain = one data point. Five independent sources mentioning your brand in the same context = consensus. Consensus is what gets cited.
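The "one page versus five sources" idea can be tracked with simple counting: how many distinct third-party sites mention you? This is a hedged sketch. It treats each hostname (minus `www.`) as one independent source, which is a simplification; a stricter version would group subdomains using the Public Suffix List. The threshold of five comes from the example above, not from any published cutoff, and the URLs are hypothetical.

```python
from urllib.parse import urlparse

# Threshold taken from the "five independent sources" example (assumption).
CONSENSUS_THRESHOLD = 5


def independent_sources(mention_urls: list[str], own_domain: str) -> set[str]:
    """Distinct third-party hostnames among URLs that mention the brand.

    Simplification: each hostname minus a leading 'www.' counts as one source,
    so two subdomains of the same site are counted separately.
    """
    sources: set[str] = set()
    for url in mention_urls:
        host = (urlparse(url).hostname or "").removeprefix("www.")
        if host and host != own_domain and not host.endswith("." + own_domain):
            sources.add(host)
    return sources


def has_consensus(mention_urls: list[str], own_domain: str) -> bool:
    """True once enough independent sources mention the brand."""
    return len(independent_sources(mention_urls, own_domain)) >= CONSENSUS_THRESHOLD


mentions = [  # hypothetical URLs for illustration
    "https://www.reddit.com/r/SaaS/comments/abc/",
    "https://reddit.com/r/marketing/comments/def/",
    "https://www.youtube.com/watch?v=xyz",
    "https://blog.example.org/best-tools",
    "https://www.yourbrand.com/blog/aeo",
]
print(sorted(independent_sources(mentions, "yourbrand.com")))
# ['blog.example.org', 'reddit.com', 'youtube.com']
print(has_consensus(mentions, "yourbrand.com"))  # False
```

Note that two Reddit threads count once here, and your own blog post counts not at all: only breadth across sites moves the number.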

Three platforms to prioritize:

- Reddit threads
- YouTube videos
- Third-party listicles

Track which queries you're currently getting cited for — and which ones you're absent from — using CheckThat.

---

Prioritize your AI content optimization queue by targeting the 20% of pages that drive 80% of your traffic and are closest to being AI-citation-ready. Think: definition pages, how-to guides, and comparison content first.

Prioritization framework:

How to build the queue: pull your top 20 pages from GA4, run the standalone test on each one, and rank by estimated effort vs. traffic value. The pages closest to passing the standalone test with the highest traffic are your first target.
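The effort-versus-value ranking can be sketched in a few lines. This version uses a hypothetical `Page` record: it first keeps the highest-traffic pages that together cover 80% of sessions (the 80/20 cut above), then orders them by sessions divided by an effort proxy, namely the number of sections that failed the standalone test, plus one. Both the proxy and the field names are assumptions; swap in whatever effort estimate your team trusts.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    sessions: int          # traffic, e.g. from a GA4 pages report export
    failing_sections: int  # H2 sections that failed the standalone test


def top_traffic_share(pages: list[Page], share: float = 0.8) -> list[Page]:
    """Highest-traffic pages that together cover `share` of all sessions."""
    ranked = sorted(pages, key=lambda p: p.sessions, reverse=True)
    total = sum(p.sessions for p in ranked)
    selected: list[Page] = []
    running = 0
    for page in ranked:
        if total and running >= share * total:
            break
        selected.append(page)
        running += page.sessions
    return selected


def build_queue(pages: list[Page], share: float = 0.8) -> list[Page]:
    """Order the high-traffic pages by traffic per unit of estimated effort."""
    # Effort proxy (assumption): one unit per failing section, plus one for review.
    return sorted(
        top_traffic_share(pages, share),
        key=lambda p: p.sessions / (p.failing_sections + 1),
        reverse=True,
    )


pages = [  # hypothetical numbers for illustration
    Page("/what-is-aeo", 5000, 1),
    Page("/aeo-vs-seo", 3200, 0),
    Page("/ai-search-history", 1300, 4),
    Page("/old-announcement", 500, 3),
]
print([p.url for p in build_queue(pages)])  # ['/aeo-vs-seo', '/what-is-aeo']
```

Here the comparison page jumps ahead of the higher-traffic definition page because it already passes the standalone test, which matches the "closest to citation-ready first" rule.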

Time estimate: 30 articles fully optimized in 2–3 days for a solo content manager running this systematically.

Maintenance: add a quarterly calendar event to run the standalone test on newly published content. The system only holds if new content gets the same structural treatment.

---

See how your brand currently shows up in ChatGPT, Perplexity, and Google AI Mode, before your competitors figure out what you're ranking for. Try CheckThat free.
